Large Language Models (LLMs) have transformed how organisations approach translation. With minimal setup, businesses can generate multilingual content at speed and scale. For exploratory use or low-risk material, generic LLM-based translation can be sufficient.

However, once translation becomes operational, integrated into websites, product documentation, regulatory communications, or brand-driven content, limitations begin to surface.

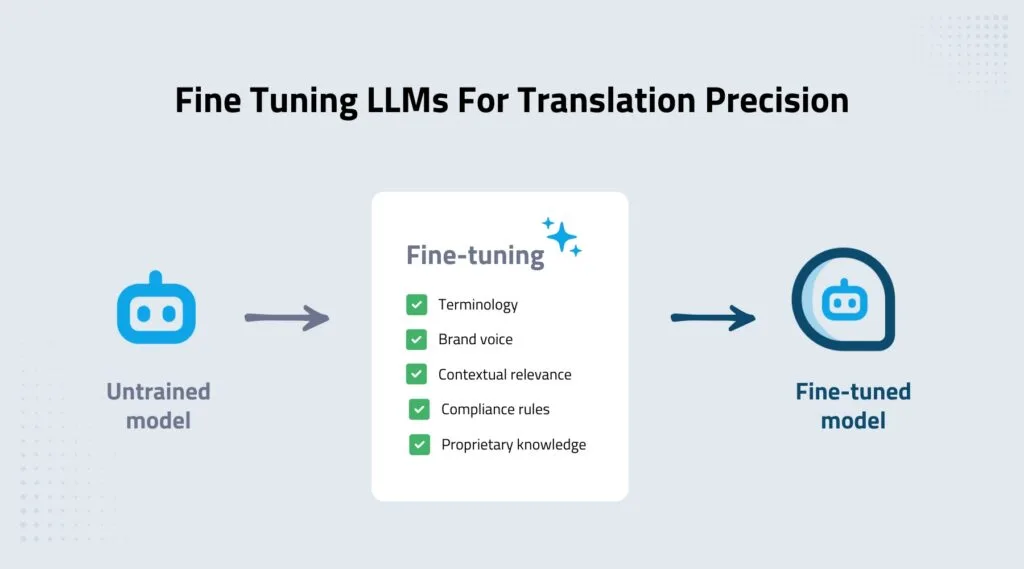

This is where LLM fine-tuning for translation becomes critical.

Rather than relying on broadly trained models designed for general language tasks, fine-tuned LLMs are adapted using domain-specific data, approved terminology, and structured linguistic standards. The result is not simply faster translation, but more accurate and consistent output aligned with real business requirements.

How LLM fine-tuning for translation works

LLM fine-tuning continues training a base large language model on curated datasets so that it internalises how language should be handled in a defined context. For translation, these datasets typically include:

- Validated terminology

- Industry-specific phrasing

- Brand voice conventions

- Approved bilingual reference material

- Quality standards defined by subject matter experts
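Preparing that material can be sketched in Python. This is a minimal sketch, assuming the approved bilingual reference material is available as (source, target) segment pairs and that the fine-tuning service accepts chat-style JSON Lines records with system, user and assistant roles. The record schema, function names and sample sentences are illustrative assumptions, so check your provider's documentation for its exact format. The sketch also applies one simple quality gate: identical source segments with conflicting approved translations are held back for subject matter experts instead of being used for training.

```python
import json
from collections import defaultdict

def build_training_records(pairs, src_lang, tgt_lang, style_note):
    """Turn approved (source, target) pairs into chat-style training records.

    The schema is an assumption (a common chat format); adapt it to your provider.
    Identical source segments with conflicting translations are held back for
    expert review, since training on them would teach the model to vary.
    """
    targets_by_source = defaultdict(set)
    for source, target in pairs:
        targets_by_source[source.strip()].add(target.strip())  # exact duplicates merge

    instruction = f"Translate from {src_lang} to {tgt_lang}. {style_note}"
    records, conflicts = [], {}
    for source, targets in targets_by_source.items():
        if len(targets) > 1:
            conflicts[source] = sorted(targets)  # needs a human decision
            continue
        (target,) = targets
        records.append({
            "messages": [
                {"role": "system", "content": instruction},
                {"role": "user", "content": source},
                {"role": "assistant", "content": target},
            ]
        })
    return records, conflicts

def to_jsonl(records):
    """Serialise records as JSON Lines: one JSON object per line."""
    return "".join(json.dumps(r, ensure_ascii=False) + "\n" for r in records)

# Hypothetical English-to-German reference pairs.
pairs = [
    ("Download your invoice.", "Laden Sie Ihre Rechnung herunter."),
    ("Download your invoice.", "Laden Sie Ihre Rechnung herunter."),
    ("Your invoice is ready.", "Ihre Rechnung ist bereit."),
    ("Contact support.", "Kontaktieren Sie den Support."),
    ("Contact support.", "Wenden Sie sich an den Support."),  # conflicting translation
]
records, conflicts = build_training_records(
    pairs, "English", "German", "Use formal address (Sie)."
)
print(len(records), "records;", len(conflicts), "conflict(s)")
print(to_jsonl(records[:1]))
```

Here the two "Contact support." translations are returned in `conflicts` rather than trained on, so a linguist can decide which one becomes the approved rendering.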

Unlike prompt-based configuration, where instructions must be supplied with every request, fine-tuning updates the model’s weights, so the learned behaviour persists. The model learns preferred linguistic structures instead of being repeatedly instructed to follow them.

This adjustment reduces output variability, particularly when translation volumes increase or when multiple teams rely on the same system.

Why generic LLM translation struggles at scale

Generic LLMs are trained on extensive and diverse datasets. They offer flexibility and fluency, but often lack the precision required for enterprise translation.

In business translation, precision matters. Terminology must remain stable across documents, markets, and time. Even small inconsistencies can create confusion, compliance exposure, or brand dilution.

As content volume grows, reliance on manual correction increases. The efficiency gains initially associated with AI translation are gradually offset by review cycles and post-editing effort.

LLM fine-tuning for translation addresses this by embedding preferred terminology and linguistic expectations directly into the model. Instead of reacting to instructions, the system behaves predictably within defined translation parameters.

How fine-tuned LLMs improve translation accuracy

Translation accuracy in enterprise environments extends beyond grammatical correctness. It includes:

- Terminology consistency

- Context preservation

- Alignment with industry usage

- Stable tone across markets

- Repeatable output across teams

Fine-tuned LLMs improve these areas because they are trained on relevant production material rather than relying solely on general data.
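Terminology consistency, the first item in that list, can be tracked automatically. This is a minimal sketch in Python, assuming a glossary that maps each source term to one approved target term. The function name and sample data are hypothetical, and the plain case-insensitive substring matching is a deliberate simplification: it will miss inflected forms and can over-match inside longer words.

```python
def term_adherence(segments, glossary):
    """Share of glossary-term occurrences rendered with the approved target term.

    segments: list of (source_text, translated_text) tuples.
    glossary: mapping of source term -> approved target term (hypothetical data).
    Returns (rate, misses), where misses lists (source_term, source_text) pairs.
    """
    hits, total, misses = 0, 0, []
    for source, translation in segments:
        src, tgt = source.lower(), translation.lower()
        for source_term, target_term in glossary.items():
            if source_term.lower() in src:          # term occurs in the source
                total += 1
                if target_term.lower() in tgt:      # approved rendering used
                    hits += 1
                else:
                    misses.append((source_term, source))
    rate = hits / total if total else 1.0           # no occurrences: nothing to violate
    return rate, misses

glossary = {"invoice": "Rechnung", "account": "Konto"}
segments = [
    ("Open your account.", "Öffnen Sie Ihr Konto."),
    ("Pay the invoice.", "Bezahlen Sie die Faktura."),
    ("Your account invoice is ready.", "Ihre Kontorechnung ist bereit."),
]
rate, misses = term_adherence(segments, glossary)
print(f"{rate:.0%}")  # 75%
print(misses)
```

Running the same check on output from a generic model and a fine-tuned model over identical source content gives a like-for-like comparison, and the `misses` list points reviewers at the exact segments to inspect.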

When implemented correctly, fine-tuning reduces downstream correction and increases confidence in first-pass outputs. Review processes shift from reactive editing to structured oversight.

Moving from generic LLMs to custom translation models

As organisations move beyond experimentation and integrate AI into production workflows, reliability becomes more important than novelty.

Generic LLM translation demonstrates what is possible.

Fine-tuned LLM translation determines what is dependable.

At scale, the difference becomes measurable: reduced variability, fewer review cycles, and stronger alignment with defined language standards.
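One way to make "fewer review cycles" measurable is to log each raw machine translation next to the version a reviewer approved, then track how much was changed. This is a minimal sketch, assuming such a review log exists as (machine output, post-edited text) pairs. The character-level, length-normalised edit distance used here is a simple stand-in for established post-editing metrics such as HTER, not a standard definition, and the function names are hypothetical.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions or substitutions turning a into b."""
    prev = list(range(len(b) + 1))          # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]                          # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,                # delete ca
                curr[j - 1] + 1,            # insert cb
                prev[j - 1] + (ca != cb),   # substitute (free if characters match)
            ))
        prev = curr
    return prev[-1]

def post_edit_distance(mt_output: str, post_edited: str) -> float:
    """Edit distance normalised to 0..1; 0 means the reviewer changed nothing."""
    longest = max(len(mt_output), len(post_edited))
    return levenshtein(mt_output, post_edited) / longest if longest else 0.0

def mean_post_edit_distance(segments) -> float:
    """Average normalised distance over (mt_output, post_edited) pairs."""
    if not segments:
        return 0.0
    return sum(post_edit_distance(mt, pe) for mt, pe in segments) / len(segments)

# Hypothetical review log: raw output versus the reviewer-approved version.
review_log = [
    ("Bezahlen Sie die Faktura.", "Bezahlen Sie die Rechnung."),
    ("Öffnen Sie Ihr Konto.", "Öffnen Sie Ihr Konto."),
]
print(round(mean_post_edit_distance(review_log), 3))
```

Tracked per release or per model, a falling mean suggests reviewers are spending less effort per segment; it does not capture whether the unchanged output was actually correct, so it complements rather than replaces human quality review.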

For organisations seeking a structured approach to LLM fine-tuning for translation, our Custom AI Translation service provides a framework for adapting models using validated terminology, reference content, and defined quality benchmarks rather than relying solely on generic training data.